
Journal of Safety Engineering

p-ISSN: 2325-0003 e-ISSN: 2325-0011

2017; 6(2): 15-28

doi:10.5923/j.safety.20170602.01

Construction Safety Management Systems and Methods of Safety Performance Measurement: A Review

Elyas Jazayeri, Gabriel B. Dadi

Department of Civil Engineering, Univ. of Kentucky, Lexington, USA

Correspondence to: Elyas Jazayeri, Department of Civil Engineering, Univ. of Kentucky, Lexington, USA.


Copyright © 2017 Scientific & Academic Publishing. All Rights Reserved.

This work is licensed under the Creative Commons Attribution International License (CC BY).

http://creativecommons.org/licenses/by/4.0/

The construction industry experiences high injury and fatality rates and is far from achieving a zero-injury goal. Effective safety management systems are therefore critical to ongoing efforts to improve safety. A clear definition of safety management systems is required, and the elements included in a safety management system should be identified so that practitioners can use them to improve safety. Researchers and organizations have introduced a variety of safety management systems, most of which were developed based on the root causes of injuries and fatalities within organizations. This review gives an overview of safety management systems in various fields to identify their similarities and differences. Its aim is to present state-of-the-art research on a variety of safety management systems and related methods of measurement, and to provide background on how these systems were developed. The primary contribution of this review to the body of knowledge is providing insights into existing safety management systems, their critical elements, and appropriate methods of safety performance measurement, which can enable owners, contractors, and decision makers to choose and implement elements.

Keywords: Safety Management, System, SMS, Construction Industry, Safety Performance, Measurement

Cite this paper: Elyas Jazayeri, Gabriel B. Dadi, Construction Safety Management Systems and Methods of Safety Performance Measurement: A Review, Journal of Safety Engineering, Vol. 6 No. 2, 2017, pp. 15-28. doi: 10.5923/j.safety.20170602.01.


1. Introduction

- The construction industry is one of the most hazardous industries (Edwards & Nicholas, 2002). Despite significant improvement since the Occupational Safety and Health Act of 1970, construction workers still experience high injury and fatality rates compared with other industries (Bureau of Labor Statistics, 2011). According to the Bureau of Labor Statistics (BLS), in 2012 alone the construction industry experienced 856 fatalities, accounting for 19% of all fatalities across industries (BLS, 2013). More than 60,000 fatalities are reported in the construction industry worldwide every year (Lingard, 2013). In the United States, the number of fatal injuries in construction increased by 16% from 2011 to 2014 (BLS, 2015). According to Zhou et al. (2015), the construction industry is far from reaching the goal of zero injuries. 2014 was a particularly dangerous year: fatalities increased 5% (40 individuals) to 885, the highest number since 2008 (BLS, 2015). Almost half of all fatalities in 2014 (415) were contracted workers on construction projects; of these, 108 were laborers, 48 were electricians, 44 were first-line supervisors, 42 were roofers, and 25 were painters and construction maintenance workers (BLS, 2015). The death rate for construction workers in the U.S. appears to be significantly higher than rates elsewhere in the world (Ringen et al., 1995), although the lack of uniform parameters across countries complicates comparison. For example, U.S. studies include hazardous waste cleanup, but European countries usually do not, and the German fatality rate does not include structural steel erection (Ringen et al., 1995). The accuracy of these data is also often disputed. According to Weddle (1997), injury surveillance systems can fail at the employee level, the organizational level, or both. In the first case, the employee must inform his or her employer of the injury.
If this does not happen, there is no record of it. In the second case, organizations must accurately record injuries in the Occupational Safety and Health Administration (OSHA) Log of Work-Related Injuries and Illnesses (Form 300) according to OSHA guidelines; if the logs are inaccurate, the BLS data derived from them are flawed. One possible reason that safety research receives significant attention from construction firms is the direct and indirect cost of accidents. Although fatalities and injuries in construction are high compared with other industries (Leigh & Robbins, 2004), few cost estimates for injuries and fatalities are available (Waehrer et al., 2007). The total cost of fatal and non-fatal injuries was estimated at around $11.5 billion in 2002, or about $27,000 per case. The injury compensation payments that construction workers receive are about double those received by workers in other industries (Georgine et al., 1997). Generally, construction accident costs range from 7.9% to 15% of project cost (Everett & Jr, 1996). According to Rikhardsson and Impgaard (2004), hidden costs amount to 35% of total accident costs and can vary from 2% to 98% depending on the accident type. Rikhardsson and Impgaard (2004) also classify the costs associated with each accident into three types. Variable costs depend on the number of missed days for which the company pays lost wages. Fixed costs are incurred by the company for every accident through administrative and communication expenses. Disturbance costs depend on the importance of the injured person’s role in the company. The objective of this paper is to review the state of knowledge regarding safety management systems in different organizations and to introduce active and passive methods of safety performance measurement in construction.
The background and model development of multiple safety management systems, as well as their elements, are discussed in detail. Practitioners can use this paper to implement a safety management system with appropriate methods for measuring its elements, and it may also benefit researchers working in related fields.

2. Characteristics of Construction Industry

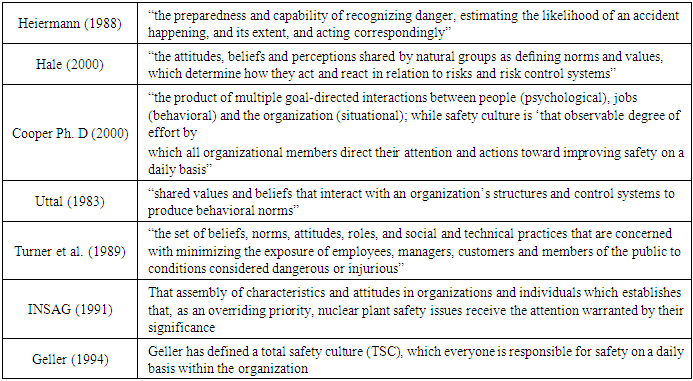

- According to Hallowell (2008), three main characteristics of the construction industry directly affect construction safety. Each is discussed briefly below.

Fragmentation

One of the unique features of the construction industry is its fragmentation. The most prevalent delivery method in the U.S. has been Design-Bid-Build (DBB). In this method, the constructor is solely responsible for worker safety, and designers have historically not addressed site safety in their designs because they feel they lack adequate training (Gambatese, 1998). Modern delivery methods, in which designers and constructors work together, could lead to better safety performance (J Hinze, 1997; Hecker et al., 2005). One study found differences in both project performance and safety performance between Design-Build (DB) and Design-Bid-Build projects, with DB projects performing better on both (Thomas et al., 2002).

Dynamic Work Environment

Unlike manufacturing tasks, construction tasks are not repetitive. Each job site has its own characteristics, which makes construction work dynamic. Construction workers perform a variety of tasks, and their next job could be on a completely different construction project. Hallowell (2008) compared manufacturing and construction in terms of working conditions and found that repetition, task predictability, and task standardization are high in manufacturing and low in construction, which could be one reason for the difference in fatality rates between the two industries.

Safety Culture

The term “safety culture” was first introduced at a post-accident review meeting after the Chernobyl disaster in 1986 (Choudhry et al., 2007). Poor safety culture is recognized as a significant factor in accident occurrence (Dester & Blockley, 1995). Table 1 outlines a variety of safety culture definitions.
Researchers and organizations have defined safety culture differently, yet the definitions share a similar basis (Cooke & Rousseau, 1988). In addition, all identify safety culture as fundamental to how organizations manage the safety aspects of their operations (Glendon & Stanton, 2000).


3. Why Safety Management?

- The use of safety management systems has become more common over the last three decades. Researchers have investigated the root causes of accidents on a variety of construction sites (Hinze et al. 1998; Abdelhamid and Everett 2000). Others have examined the roles of designers, construction managers, and owners in safety (Suraji et al. 2001; Huang and Hinze 2006; Wilson and Kohen 2000; Toole 2002). Based on the literature, the first step toward a safety management system was to identify the variables related to injuries and fatalities. Researchers have sought not merely correlations but causal relationships between incidents and the variables that caused those injuries or fatalities. Shannon et al. (1992) investigated 1,032 industrial firms in Ontario (firms with more than 50 workers) based on their lost time over two years. Likewise, Habeck et al. (1991) used factor analysis to investigate industrial firms in Michigan (firms with more than 50 workers). Similarly, Hunt et al. (1993) analyzed Michigan firms (firms with more than 100 workers) using factor analysis. Shannon et al. (1997) reviewed and analyzed multiple previous studies (Shannon et al. 1992; Tuohy and Simard 1993; Habeck et al. 1998; Hunt et al. 1993; Cohen et al. 1975; Shafai-Sahrai 1973; Shafai-Sahrai 1977; Chew 1988; Simard et al. 1988; Marchand 1994; Mines 1983) on the relationship between organizational and workplace factors and injury rates.

The paper is divided into two parts: the definitions and background of safety management systems, and the types of safety performance measurement, which are introduced later.

4. Definition of Safety Management System

- There is no consensus among organizations and agencies on the definition of a safety management system (Robson et al., 2007). The Safety Management International Collaboration Group defines a safety management system as “a series of defined, organization-wide process that provides for effective risk-based decision-making related to your daily business” (SMIC, 2010). The International Civil Aviation Organization (ICAO) defines it as a “systematic approach to managing safety, including the necessary organizational structures, accountabilities, policies and procedures” (ICAO, 2007). The International Labor Organization (ILO, 2001) defines it as “A set of interrelated or interacting elements to establish occupational safety and health policy and objectives, and to achieve those objectives.”

5. Elements of Safety Management Systems in Different Organizations

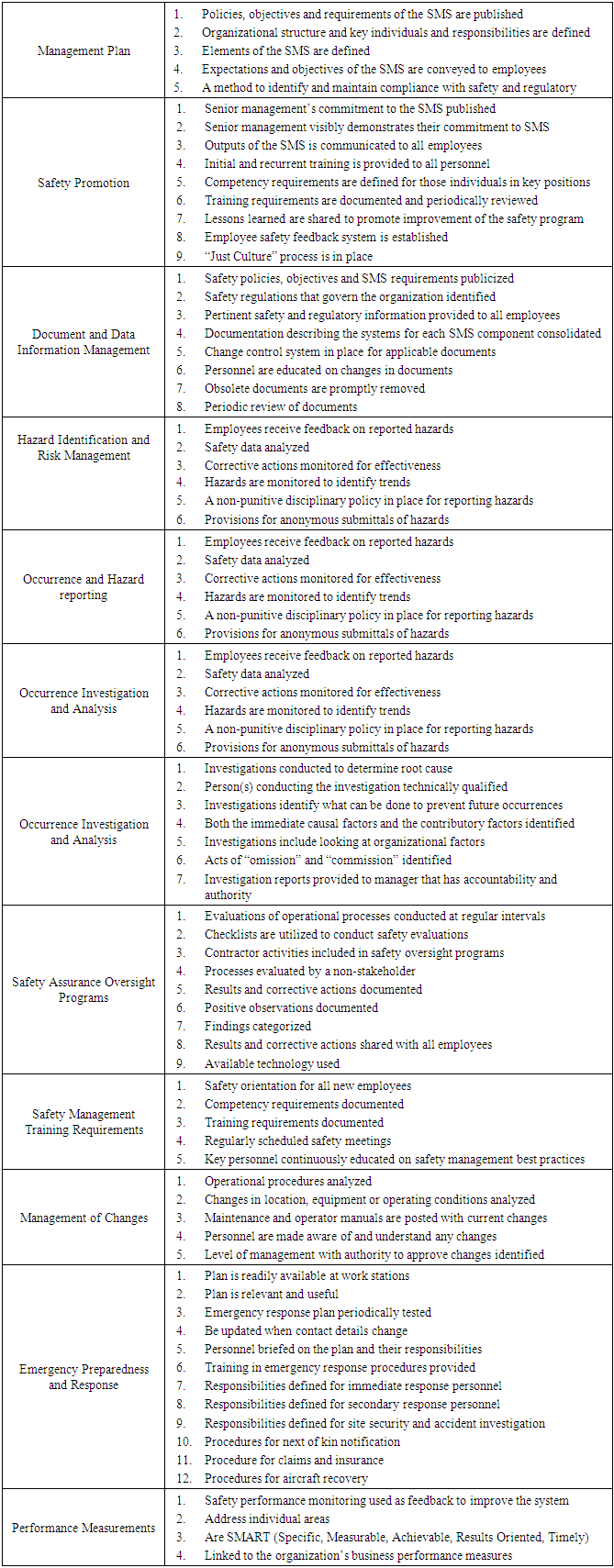

- Each organization has its own definition and elements for implementing safety management systems. Some studies have derived the elements of safety management systems by comparing companies with high and low accident rates (Smith et al. 1978; Eyssen et al. 1980; Haber et al. 1990; Zohar 1980). Others have identified elements associated with good safety performance through case studies of high-reliability organizations (Rochlin 1989; Roberts 1989). Many research projects have derived elements of good safety management systems from accident analyses (Hurst et al. 1991; McDonald et al. 1994; Powell et al. 1971). Most safety management models have been developed by researchers. According to Hale et al. (1997), textbooks on safety management (Heinrich et al. 1980; Ridley 1994) mostly describe existing guidelines and standards without presenting any model. It should also be noted that, in the past, most studies on safety management were conducted by researchers in psychology, engineering, and sociology (Vaughn 1997; Gehman 2003; Shrivasta 1987; Perrow 1984), which could explain the multiple definitions of and approaches toward safety. According to Choudhry et al. (2007), the benefits of a safety management system in the construction industry are:

1. Reducing the number of injuries to personnel and operatives in the workplace through the prevention and control of workplace hazards;
2. Minimizing the risk of major accidents;
3. Controlling workplace risks, which improves employee morale and enhances productivity;
4. Minimizing production interruptions and reducing material and equipment damage;
5. Reducing the cost of insurance as well as the cost of employee absences;
6. Minimizing the legal costs of accident litigation and fines, and reducing expenditures on emergency supplies; and
7. Reducing accident investigation time, supervisors’ diverted time, clerical effort, and the loss of expertise and experience.
Generally, safety management system elements fall into three main groups: 1. administrative management elements, 2. operational technical elements, and 3. cultural/behavioral elements. According to the Overseas Territories Aviation Circular (OTAR), the overarching component of a safety management system is safety policy, which is a clear statement of management’s purpose, intentions, and policies for continuous safety improvement at the organizational level (OTAR, 2006). OTAR has introduced six elements for its safety management system. The first is objectives: planning how to reach goals and the proposed methods for doing so, which can also include vision and mission statements. The primary reason for setting objectives is to motivate the whole organization. The second element is defining roles and responsibilities; for example, what is senior management’s role in designating safety and health staff or assigning responsibilities to supervisors and superintendents? The third element is hazard identification, which includes initial hazard identification reports and safety assessment. The fourth is risk assessment and mitigation, in which methods for analyzing risks are discussed and methods for mitigating them are decided. The fifth is monitoring and evaluation, which may take the form of reviews, audits, or other quality assurance methods. The last element is documentation: all safety management system processes, including existing manuals, safety records, permits, allowances, and any other relevant items, should be documented and kept on record (OTAR, 2006).

The Federal Aviation Administration identifies four essential components for developing a safety management system: safety policy, safety assurance, safety risk management, and safety promotion.
Under safety policy, the FAA lists senior management’s commitment, establishing clear safety objectives, and defining methods and processes to meet safety goals; under safety promotion, it places training, communication and awareness of system safety, matching competency requirements to system requirements, and building a positive safety culture.

The International Helicopter Safety Team lists the following attributes of a safety management system (Symposium, 2007):
• Management plan
• Safety promotion
• Document and data information management
• Hazard identification and risk management
• Occurrence and hazard reporting
• Occurrence investigation and analysis
• Safety assurance oversight programs
• Safety management training requirements
• Management of changes
• Emergency preparedness and response
• Performance measurement and continuous improvement

Another organization that defines specific elements of a safety management system is Oregon OSHA, whose Safety and Health model consists of seven elements. This management system has gained significant attention in industry and academia, and it is a free tool available to the public for improving safety performance. The first element is management commitment. This element is important in every safety management system because it reflects management’s commitment to protecting employees. According to Oregon OSHA (2002), management can show employees that it is serious about creating a safe workplace in several ways, such as setting measurable safety objectives, as it does for organizational financial goals. Other ways include assigning safety responsibilities to staff, establishing and maintaining active communication so that employees feel they can talk about safety in their workplace, and, most importantly, showing concern for safety at every opportunity to speak or act in front of staff and employees.
The next element of a safety management system based on Oregon OSHA is accountability. Oregon OSHA recommends several methods to improve and strengthen accountability, such as:
• “Employee’s written job description clearly state their safety and health responsibilities
• Employees have enough authority, education, and training to accomplish their responsibilities
• Employees are praised for jobs well done
• Employees who behave in ways that could harm them or others are appropriately disciplined” (OSHACADEMY, 2017).

The next element is employee involvement, which is important for continuous improvement of the program. Examples of employee involvement based on Oregon OSHA include:
• “You promote the program, and employees know that you are committed to a safe, healthful workplace.
• Employees help you review and improve the program
• Employees take safety education and training classes. They can identify hazards and suggest how to eliminate or control them
• Employees volunteer to participate on the safety committee” (OSHACADEMY, 2017).

The next element is hazard identification and control. This element is important because existing and potential hazards must first be identified before processes can be put in place to eliminate or mitigate them. Oregon OSHA lists the following ways to correct and control dangers:
• “An initial danger identification survey
• A system for danger identification (such as inspection at regular intervals);
• An effective system for employees to report conditions which may be dangerous (such as a safety committee or a safety representative)
• An equipment and maintenance program
• A system for review or investigation of workplace accidents, injuries and illness
• A system for initiating and tracking danger corrective actions
• A system for periodically monitoring the place of employment” (OSHACADEMY, 2017)

The fifth element of the safety management system based on Oregon OSHA is incident/accident analysis.
Accidents and incidents should be analyzed to improve performance and to support continuous improvement of the safety management system. Accidents and incidents are often preventable, yet they are not prevented because of the poor performance and structure of safety management. The recommended approach to effective analysis is to identify root causes rather than chasing individual accidents. The next element is education and training, which is also considered one of the drivers of continuous improvement. Employees should know about hazards, methods of controlling them, and the steps to take during emergencies, in order to reduce risk and ultimately reduce injuries and fatalities. This knowledge is generally obtained through intensive training and education programs within the organization, and training sessions should be held periodically for laborers, supervisors, and management. The last element of the Oregon OSHA safety management system is reviewing and evaluating the safety program. This begins with gathering data and information on previous accidents or near misses and comparing them with current data to evaluate the program. Reviewing the program periodically is necessary to know whether a company is moving in the right direction toward its safety objectives.

According to Petersen (2005), there are nine elements of successful health and safety systems; suitable measurements for each are discussed below.

Management’s Credibility: Metrics for measuring management’s credibility include the percentage of health and safety meetings that top management attends and the percentage of safety objectives achieved on schedule.

Supervisory Performance: The best way to measure this parameter is a perception survey.
Beyond that, other metrics include the number of daily, weekly, or monthly safety meetings held by supervisors.

Employee Involvement and Participation: Metrics for this parameter include the number of peer observations and the number of employees who participate in safety meetings, although the author prefers perception surveys and audits.

Employee Training: As with the previous parameter, the best way to measure this factor is a perception survey, but other metrics can also be used, such as the budget assigned to health and safety training or the percentage of employees who receive special training beyond the usual training.

Employee Attitude and Behavior: Suggested metrics include the percentage of participation in safety observations and the percentage of those observations accomplished on schedule.

Communications: The number of safety meetings and the effectiveness of rewards are common measures used in various industries.

Accident Investigation Procedures: Metrics for this parameter include the percentage of accidents the organization investigates to root cause, the average time from when an incident happens until its investigation is completed, and the average time from when an incident occurs until the issue is corrected.

Hazard Control: Perception surveys and audits are the best methods for measuring hazard control, but metrics can also be used, such as the number of accidents or incidents that demonstrate a failure in planning or the percentage of design staff with special training in ergonomics.

Stress: Metrics for this item could include average overtime or the number of safety audits carried out.

To implement a safety management system, the Insurance Institute for Highway Safety (IHSS) provides the elements that are required.
Table 2 shows the 11 main sections and 89 sub-elements of this framework (Symposium, 2007):

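Several of Petersen’s (2005) metrics for accident investigation procedures are simple ratios and averages over incident records. The sketch below illustrates how they might be computed; the `Incident` record layout, its field names, and the sample records are hypothetical and chosen only for illustration, not taken from Petersen.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

@dataclass
class Incident:
    """Hypothetical incident record; field names are illustrative only."""
    occurred: date
    investigation_completed: Optional[date]  # None if not yet investigated
    root_cause_found: bool
    corrected: Optional[date]                # None if not yet corrected

def pct_root_cause(incidents: list[Incident]) -> float:
    """Percentage of incidents whose investigation identified a root cause."""
    if not incidents:
        return 0.0
    return 100 * sum(i.root_cause_found for i in incidents) / len(incidents)

def avg_days(incidents: list[Incident], milestone: str) -> Optional[float]:
    """Average days from occurrence to `milestone`, over incidents that reached it."""
    spans = [
        (getattr(i, milestone) - i.occurred).days
        for i in incidents
        if getattr(i, milestone) is not None
    ]
    return sum(spans) / len(spans) if spans else None

# Hypothetical sample records for one site.
records = [
    Incident(date(2017, 1, 3), date(2017, 1, 10), True, date(2017, 1, 20)),
    Incident(date(2017, 2, 1), date(2017, 2, 4), False, date(2017, 2, 15)),
    Incident(date(2017, 3, 5), None, False, None),
    Incident(date(2017, 4, 2), date(2017, 4, 7), True, None),
]
print(pct_root_cause(records))                       # 50.0
print(avg_days(records, "investigation_completed"))  # 5.0
print(avg_days(records, "corrected"))                # 15.5
```

In this sketch the averages only cover incidents that have reached the milestone, so a backlog of open investigations can make the average look better than it is; pairing the average with a count of open items would guard against that.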

6. Efficacy of Safety Management Systems

- The main objective of developing and implementing safety management systems is to improve safety performance. Bottani et al. (2009) conducted an empirical investigation to determine whether safety performance differs statistically between firms that have adopted a safety management system and firms that have not. They collected data from 116 companies across both groups. Five parameters were evaluated: definition of safety and security goals and their communication to employees, risk data updating and risk analysis, identification of risks and definition of corrective actions, and employee training. Industries included in the study were agriculture, manufacturing, building, commercial, and other fields. The study concluded that:
• Adopters scored higher on defining safety and security goals and communicating them to employees
• Adopters scored higher on updating risk data
• Adopters scored higher on assessing risks and defining corrective actions
• Adopters scored significantly higher on employee training

Multiple studies have investigated the performance of safety management systems (Vinodkumar and Bhasi 2010; Hobbs and Williamson 2003; Arocena and Nunez 2010; Rosenthat et al. 2006; Hamidi et al. 2012), and most have fully or partially supported their effectiveness. The Australian Transport Safety Bureau has reviewed the effectiveness of safety management systems (M. J. Thomas, 2012), which the Australian aviation, marine, and rail industries have recently implemented. The objective of that study was to assess, based on the research literature, the efficacy of implementing safety management systems. It concluded that well-implemented safety management systems could enhance safety performance; however, the review also showed a lack of research on safety management systems in high-risk transport domains.
Although many researchers and organizations have linked safety management systems to safety outcomes, Gallagher et al. (2003) stated that the connection between safety management systems and safety outcomes is ambiguous and invalid.

7. Necessity of Safety Performance Measurement

- According to the UK Health and Safety Executive (HSE, 1999), an accident is “any unplanned event that results in injury, and/or damage and/or loss”. H. W. Heinrich and Granniss (1959) defined an accident as follows: “An accident is an unplanned and uncontrolled event in which the action or reaction of an object, substance, person, or radiation results in personal injury or the probability thereof.” Definitions of an accident vary among organizations depending on the nature of each organization. Researchers have tried to understand accidents, and accident causation models have been introduced to prevent them (Abdelhamid & Everett, 2000). Researchers have introduced multiple accident causation models, but one of the oldest, which became the foundation for later models, is the domino theory (Heinrich, 1936). The theory is aptly named, as it represents how each factor can trigger the next, leading to the occurrence of an accident. Heinrich’s model (Heinrich, 1936) has five dominoes: ancestry and social environment, fault of the person, unsafe acts and/or mechanical or physical hazards, accident, and injury. Although the domino theory was criticized for oversimplifying the role of human behavior in accidents (Zeller, 1986), over the years it has been the foundation for developing new models or updating the domino model; for example, both Bird (1974) and Weaver (1971) updated the domino sequence. Other accident causation models exist but are not described in this paper for the sake of brevity.

The important question is why safety performance should be measured. A famous quote commonly attributed to Peter Drucker says, “If you can’t measure it, you can’t improve it.” There are many answers to this question. According to HSE, measurement is one of the four parts of the plan, do, check, and act management system (HSE, 2001).
Another motivation for measuring safety performance is to obtain early warning signs and act rapidly if emergency action is needed. Safety measurements can also serve as input to the bonus and incentive programs implemented in an organization. In addition, measurement can alter future behavior, which makes it a necessary navigational tool. The main purpose of measuring safety performance is to check the current status of safety and to observe progress under the current safety management system. According to Laufer and Ledbetter (1986), the goals of safety measurement can be described as:
• Assessing the effectiveness of the safety program at the site
• Determining the reasons for success and failure
• Identifying the location of problems as well as the level of remedial effort needed

Many questions remain regarding the measurement of safety performance. What should be measured? Which measurement tools does an organization need? How can specific measurement tools be selected for a specific type of industry? Is the OSHA recordable rate the best safety metric, and what are the advantages and disadvantages of this measurement?

8. Types of Safety Performance Measurement

- Two types of safety indicators are widely used in the safety literature: “leading” indicators and “lagging” indicators (output or post-accident measures). Leading indicators are preferred in both industry and academia.

8.1. Lagging Indicators

- Traditionally, the construction industry has measured safety performance with lagging indicators such as the total recordable injury rate (TRIR) and the fatality rate (Hinze et al., 2013). According to Lingard et al. (2013), these measures are popular because they are:
• Easy to collect
• Easily understood
• Comparable with each other
• Usable for benchmarking
• Useful for identifying trends

Lagging indicators record “after the fact” failures. Because recordable injuries and fatalities are relatively rare, these indicators may fail to reveal changes in the safety management system (Lingard et al., 2013). Lagging indicators measure the absence of safety rather than its presence (Arezes and Miguel 2003; Lofquist 2010). Injury rates have sometimes been tied to reward systems, bonuses, and manager performance appraisals (Lingard et al., 2013), which can create perverse incentives that lead employees to underreport (Cadieux et al. 2006; Spaer and Dennerlin 2013). Note that some indicators, such as near-miss reports, have been categorized both as leading indicators (Toellner, 2011) and as lagging indicators (Manuele, 2009). Hinze et al. (2013) examined this ambiguity and proposed characteristics for classifying near-miss reports: if a firm uses near-miss reports as a recordable count, they are a lagging indicator; if it uses them proactively, they should be categorized as a leading indicator.

The prevailing lagging indicators used across a variety of industries are explained briefly below, along with a worked example of each calculation.
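Why rare events make lagging indicators insensitive can be illustrated with a simple probability model. The sketch below assumes, purely for illustration, that a firm’s recordable injuries follow a Poisson distribution with a hypothetical expected count of 2 per year; neither the distribution nor the rate comes from the cited studies.

```python
import math

def poisson_pmf(k: int, expected: float) -> float:
    """Probability of exactly k events when `expected` events are expected (Poisson assumption)."""
    return math.exp(-expected) * expected ** k / math.factorial(k)

# Hypothetical firm whose safety system does not change from year to year:
# on average it expects 2 recordable injuries per year.
EXPECTED_PER_YEAR = 2.0

for k in range(6):
    print(k, round(poisson_pmf(k, EXPECTED_PER_YEAR), 3))

# Chance that a year looks "injury-free" with no underlying improvement,
# and chance it shows 4 or more injuries with no underlying deterioration.
p_zero = poisson_pmf(0, EXPECTED_PER_YEAR)
p_four_plus = 1 - sum(poisson_pmf(k, EXPECTED_PER_YEAR) for k in range(4))
print(round(p_zero, 3), round(p_four_plus, 3))  # 0.135 0.143
```

Under these assumptions, roughly one year in seven would show zero recordables and roughly one in seven would show four or more, even though nothing in the safety management system has changed.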

8.1.1. OSHA Recordable Incident Rate (TRIR)

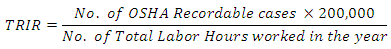

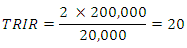

- For many reasons, most organizations still use traditional safety measures such as the OSHA incident rate, which is the number of recordable incidents per 200,000 hours worked. The 200,000-hour base corresponds to 100 full-time employees working 40 hours per week for 50 weeks a year. A similar measure introduced by the American National Standards Institute (ANSI) is the number of incidents per 1 million hours worked. According to OSHA, injuries that require treatment by a physician and cases in which a worker loses consciousness on the job should be considered recordable. The equation from OSHA (2003) for calculating the recordable incident rate is shown, followed by an example.
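The rate definition above normalizes a count of recordable cases to a fixed number of exposure hours. A minimal sketch, assuming only the 200,000-hour (OSHA) and 1,000,000-hour (ANSI) bases described in the text; the function and constant names are hypothetical.

```python
OSHA_BASE_HOURS = 200_000    # 100 full-time employees x 40 h/week x 50 weeks
ANSI_BASE_HOURS = 1_000_000  # incidents per one million hours worked

def incident_rate(recordable_cases: int, hours_worked: float,
                  base_hours: float = OSHA_BASE_HOURS) -> float:
    """Recordable cases normalized to `base_hours` of exposure."""
    if hours_worked <= 0:
        raise ValueError("hours_worked must be positive")
    return recordable_cases * base_hours / hours_worked

# Example from the text: 10 employees x 2,000 h = 20,000 h, 2 recordable cases.
hours = 10 * 2_000
print(incident_rate(2, hours))                   # 20.0
print(incident_rate(2, hours, ANSI_BASE_HOURS))  # 100.0

# For a firm this small, a single extra case moves the rate by 10 points.
print(incident_rate(3, hours) - incident_rate(2, hours))  # 10.0
```

The last line shows why the text cautions that the rate is more meaningful for larger organizations: with few exposure hours, each individual case has a large effect on the result.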

Example: If the company has 10 employees who each work 2,000 hours annually, the total hours worked are 20,000. If the company had two recordable incidents in that year, the IR would be (2 × 200,000) / 20,000 = 20.


8.1.2. Lost Time Cases (LTC)

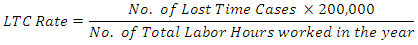

- According to OSHA (2003), any injury or illness that leaves the employee unable to work an assigned work shift is considered a lost time case (LTC). Note that a death is not categorized as an LTC. The LTC rate is calculated as:

$$\text{LTC rate} = \frac{\text{Number of lost time cases} \times 200{,}000}{\text{Total man-hours worked}}$$

Example- If the company has 13 employees who each work 2,000 hours annually, the total man-hours worked is 26,000. If the company had 8 lost time cases, the LTC rate would be:

$$\text{LTC rate} = \frac{8 \times 200{,}000}{26{,}000} \approx 61.5$$

This means that about 61.5 lost time cases, in which an employee was unable to work for some time because of injury or illness, occurred for every 100 full-time employees. As with the OSHA recordable incident rate, the rate becomes more meaningful for larger companies.
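The same normalization applies to lost time cases. In this minimal sketch (the function name `lost_time_case_rate` is hypothetical), the caller is assumed to pass only qualifying lost time cases, with deaths already excluded as noted above; the function does not classify cases itself.

```python
# Minimal sketch of the lost time case (LTC) rate; the name is hypothetical.
BASE_HOURS = 200_000  # same exposure base as the OSHA recordable incident rate


def lost_time_case_rate(lost_time_cases: int, hours_worked: float) -> float:
    """Lost time cases per 200,000 hours; caller passes qualifying cases only
    (per the text, deaths are not counted as LTC)."""
    if hours_worked <= 0:
        raise ValueError("hours_worked must be positive")
    return lost_time_cases * BASE_HOURS / hours_worked


# Worked example from the text: 13 employees x 2,000 h, 8 lost time cases.
hours = 13 * 2_000  # 26,000 man-hours
ltc_rate = lost_time_case_rate(8, hours)
print(f"LTC rate = {ltc_rate:.2f}")  # prints: LTC rate = 61.54
```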

8.1.3. Lost Work Day Rate (LWD)

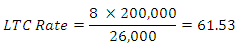

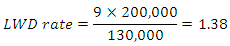

- According to OSHA (2003), the Lost Work Day Rate (LWD) gives the number of lost work days per 100 employees. The LWD rate is calculated as:

$$\text{LWD rate} = \frac{\text{Number of lost work days} \times 200{,}000}{\text{Total man-hours worked}}$$

Example- If the company has 65 employees who each work 2,000 hours annually, the total man-hours worked is 130,000. If the company lost 9 work days in that year, the LWD rate would be:

$$\text{LWD rate} = \frac{9 \times 200{,}000}{130{,}000} \approx 13.85$$

This means that about 13.85 work days were lost to injuries or illnesses for every 100 full-time employees.
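A minimal Python sketch of the LWD rate, assuming the same 200,000-hour base as the other OSHA rates (the name `lost_work_day_rate` is hypothetical). With the example's figures, 9 lost days and 130,000 hours, the rate works out to roughly 13.85 lost work days per 100 full-time employees.

```python
# Minimal sketch of the lost work day (LWD) rate; the name is hypothetical.
BASE_HOURS = 200_000  # about 100 full-time employees for one year


def lost_work_day_rate(lost_work_days: int, hours_worked: float) -> float:
    """Lost work days per 200,000 hours of exposure."""
    if hours_worked <= 0:
        raise ValueError("hours_worked must be positive")
    return lost_work_days * BASE_HOURS / hours_worked


# Example figures from the text: 65 employees x 2,000 h, 9 lost work days.
hours = 65 * 2_000  # 130,000 man-hours
lwd_rate = lost_work_day_rate(9, hours)
print(f"LWD rate = {lwd_rate:.2f}")  # prints: LWD rate = 13.85
```

Unlike the TRIR and LTC rate, the numerator here counts days rather than cases, so a single severe injury can dominate the result.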

8.1.4. Days Away, Restricted or Job Transfer (DART)

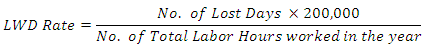

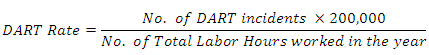

- This rate counts the incidents that resulted in one or more days away from work, one or more days of restricted work, or a transfer of the employee to a different task within the company. According to OSHA (2003), the DART rate is calculated as:

$$\text{DART rate} = \frac{\text{Number of DART cases} \times 200{,}000}{\text{Total man-hours worked}}$$

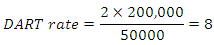

Example- If the company has 25 employees who each work 2,000 hours annually, the total man-hours worked is 50,000. If the company had one job transfer case and one injury that resulted in lost time, the DART rate would be:

$$\text{DART rate} = \frac{2 \times 200{,}000}{50{,}000} = 8$$

This means that 8 incidents resulted in days away, restricted work, or job transfer for every 100 full-time employees.
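The DART rate differs from the TRIR mainly in which cases are counted. The sketch below classifies hypothetical case records and counts each case once if it involved days away, restricted work, or a job transfer. The `CaseRecord` fields and function names are illustrative assumptions, not an OSHA record format, and the 3 days away in the lost-time case is an invented value; any positive number gives the same rate.

```python
from dataclasses import dataclass

BASE_HOURS = 200_000  # same exposure base as the other OSHA rates


@dataclass
class CaseRecord:
    """Hypothetical injury/illness record; field names are illustrative only."""
    days_away: int = 0
    restricted_days: int = 0
    job_transfer: bool = False


def is_dart_case(case: CaseRecord) -> bool:
    # Counted once if it involved days away, restricted work, or a job transfer.
    return case.days_away > 0 or case.restricted_days > 0 or case.job_transfer


def dart_rate(cases: list[CaseRecord], hours_worked: float) -> float:
    if hours_worked <= 0:
        raise ValueError("hours_worked must be positive")
    dart_cases = sum(1 for c in cases if is_dart_case(c))
    return dart_cases * BASE_HOURS / hours_worked


# Example from the text: 25 employees x 2,000 h; one job transfer, one lost-time case.
cases = [CaseRecord(job_transfer=True), CaseRecord(days_away=3)]
print(dart_rate(cases, 25 * 2_000))  # prints: 8.0

# A recordable case with no days away, restriction, or transfer is not a DART case.
print(is_dart_case(CaseRecord()))  # prints: False
```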

8.1.5. Experience Modification Rate (EMR)

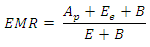

- According to Hinze et al. (1995), workers’ compensation insurance is a significant cost for any construction firm, and the EMR is widely used as an index of a firm’s past safety performance. According to Business Round Table (1991), “The experience modification rate is widely used indicator of a contractor’s past safety performance. Owners should request, from prospective contractors, EMRs for the three most recent years, and will show the firm’s trend in safety performance.” According to Hinze et al. (1995), the lower the EMR, the lower the workers’ compensation rate. One of the main disadvantages of the EMR is that it depends heavily on firm size (Garza et al. 1998). For example, a large company with a better EMR may not actually perform better on safety than a small company with a worse EMR (Hinze et al., 1995). In addition, the EMR weights the frequency of injuries more heavily than their severity. According to Hinze et al. (1995), the EMR is calculated as:

$$EMR = \frac{A_p + E_e + B}{E + B}$$

where $A_p$ = actual primary loss; $E_e$ = expected excess loss; $E$ = expected losses; and $B$ = ballast. Everett and Thompson (1995) describe each parameter of the EMR in more detail. According to Hoonakker et al. (2005), the average EMR is 1, and higher values mean that the company pays a higher insurance premium. For example, an EMR of 1.50 means that the company pays a 50% higher premium than an average company.

Another rate, the severity rate, gives a company the average number of lost days per incident. It is calculated by dividing the number of lost work days by the total number of recordable incidents, so the result is the average number of days lost per recordable incident. Only a few companies use this rate because it gives only the average number of lost days. OSHA uses recordable incident rates to compare a company’s safety performance, within its specific classification, with its past safety performance (RIT, 2017).

Some disadvantages of traditional measurements are (Petersen, 2005):
• In smaller organizations, the validity of the data is low
• They say little about whether the company is progressing
• They provide no guidance on fixing safety issues
• Most of the time, they measure luck
• The accuracy of the data cannot be relied on
• Filing and incident record keeping involve significant paperwork and bureaucracy
• Near misses are not reported most of the time
• OSHA data are not predictive and cannot be used to predict future incidents from current data
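The EMR formula and the severity rate described above can be sketched in Python. This sketch assumes the four-parameter form EMR = (A_p + E_e + B) / (E + B), built from the parameters listed (actual primary loss, expected excess loss, expected losses, ballast); full rating plans typically also weight actual excess losses, which this sketch omits. All loss figures and severity-rate inputs are hypothetical, and the function names are illustrative.

```python
# Minimal sketch, assuming EMR = (A_p + E_e + B) / (E + B); names are hypothetical.


def experience_modification_rate(actual_primary: float, expected_excess: float,
                                 expected_total: float, ballast: float) -> float:
    """Return the EMR under the assumed four-parameter form."""
    denominator = expected_total + ballast
    if denominator <= 0:
        raise ValueError("expected losses plus ballast must be positive")
    return (actual_primary + expected_excess + ballast) / denominator


def premium_change_percent(emr: float) -> float:
    """Premium relative to an average (EMR = 1.0) firm, in percent."""
    return (emr - 1.0) * 100.0


def severity_rate(lost_work_days: int, recordable_incidents: int) -> float:
    """Average lost work days per recordable incident."""
    if recordable_incidents <= 0:
        raise ValueError("recordable_incidents must be positive")
    return lost_work_days / recordable_incidents


# Hypothetical loss figures (not from the paper): E, E_e, and B.
expected_total, expected_excess, ballast = 50_000, 40_000, 10_000

# Actual primary losses equal to expected primary losses (E - E_e) give EMR = 1.0.
print(experience_modification_rate(10_000, expected_excess, expected_total, ballast))  # prints: 1.0

emr = experience_modification_rate(25_000, expected_excess, expected_total, ballast)
print(f"EMR = {emr:.2f}, premium change = {premium_change_percent(emr):+.0f}%")
# prints: EMR = 1.25, premium change = +25%

print(premium_change_percent(1.50))  # prints: 50.0, matching the 1.50 example above
print(severity_rate(9, 3))           # hypothetical: 9 lost days over 3 recordables -> 3.0
```

The first call is a useful sanity check: when a firm's actual primary losses match what is expected of it, the formula returns exactly the average EMR of 1.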

8.2. Leading Indicators

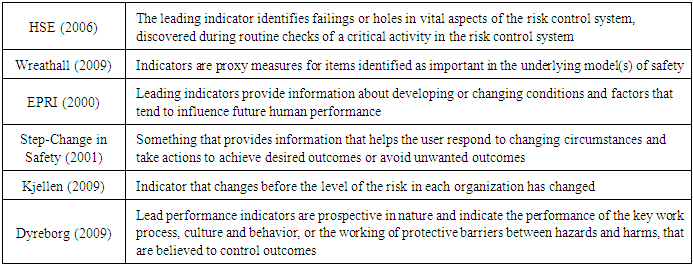

- There are a variety of definitions for leading indicators. These have been gathered from researchers and organizations and reported in Table 3.


9. Discussion

- As mentioned in the Introduction, the primary objective of this paper is to review the state of knowledge regarding safety management systems. This review shows that although a variety of safety management systems are used across industries, their foundations are similar. One feature that differed among many of these systems was the terminology used for their categories. One of the primary contributions of this review is to gather the essential parameters of safety management systems and the related safety performance measurements to increase awareness among researchers and practitioners. The paper also discussed the practicality of these systems in the construction industry.

10. Conclusions

- The aim of this review paper was to summarize the current state of knowledge regarding safety management systems based on previous research. The paper was divided into two parts: the definitions and background of safety management systems, and the types of safety measurement that have been introduced. Although most of the components of the safety management systems discussed were similar, the basis on which each was developed differs. Some models have been developed based only on high reliability organizations, and others have been developed by analyzing accidents. Lagging indicators and the calculations for the most prevalent ones were discussed in detail. The use of leading indicators, by contrast, has increased recently, judging by the number of organizations that have implemented them and the number of studies focusing on them. In addition to safety management system components, the paper has gathered both proactive and passive indicators suitable for any safety management system. With the findings summarized in this manuscript, practitioners can benefit from applying the safety management systems and the methods of safety measurement discussed here. This paper covers both past and current studies of a variety of safety management systems and safety performance measurements, which can benefit researchers in many fields.
