
American Journal of Biomedical Engineering

p-ISSN: 2163-1050 e-ISSN: 2163-1077

2016; 6(5): 147-158

doi:10.5923/j.ajbe.20160605.03

PCA-SVM Algorithm for Classification of Skeletal Data-Based Eigen Postures

Nguyen Thanh Hai1, Trinh Hoai An2

1Faculty of Electrical and Electronics Engineering, HCMC University of Technology and Education, Vietnam

2Faculty of Electronics – Telecommunications, Saigon University, Vietnam

Correspondence to: Nguyen Thanh Hai, Faculty of Electrical and Electronics Engineering, HCMC University of Technology and Education, Vietnam.


Copyright © 2016 Scientific & Academic Publishing. All Rights Reserved.

This work is licensed under the Creative Commons Attribution International License (CC BY).

http://creativecommons.org/licenses/by/4.0/

Falls are a major cause of serious injury and dangerous accidents among elderly people. A recognition system is needed to detect falls early so that help and treatment can be provided. In recent years, researchers have developed many new methods for the effective detection and recognition of human falls. In this paper, a fall recognition system using a Kinect camera system is presented, in which three subjects performed five pairs of human postures (non-fall-fall, fall-stand, fall-sit, fall-bend, fall-lying). Features of the skeletal data of the human postures obtained from the camera system are extracted using a PCA algorithm. For fall recognition and sending a notification message, an SVM algorithm is applied to train on the feature data and classify these postures. Experimental results show that the accuracy of the proposed approach for fall recognition and alert is nearly 82%.

Keywords: Skeletal data acquisition, PCA method, Fall recognition and alert, SVM algorithm

Cite this paper: Nguyen Thanh Hai, Trinh Hoai An, PCA-SVM Algorithm for Classification of Skeletal Data-Based Eigen Postures, American Journal of Biomedical Engineering, Vol. 6 No. 5, 2016, pp. 147-158. doi: 10.5923/j.ajbe.20160605.03.

Article Outline

1. Introduction

- Fall injuries are dangerous and occur frequently among older people. These injuries can be a burden on individuals, their families and healthcare systems [1]. According to the Public Health Agency of Canada in 2014 [2], the rate of older people hospitalized due to falls has been increasing; in particular, 0.4% - 0.6% of people aged 65-69 went to hospital every year, rising to 4.9% - 6.8% for people aged over 90. Falls and fall-related injuries are among the causes of death worldwide. Therefore, earlier fall recognition for elderly people is necessary and will increase their chances of survival.

- With the development of technology, an automatic system with an alarm is a necessary solution for fall recognition. Many studies in recent years have used modern devices to monitor human activities. These devices can recognize a fall and then send alarm signals to relatives and medical staff for emergency support [2]. Based on the features of falls, methods for fall detection and notification have been constructed. The most important requirement of such a system is to accurately distinguish falls from normal activities in the series of daily activities of elderly people in an indoor environment. Detection systems are based on three main approaches: camera systems [3, 4], environmental sensors [5] and wearable sensors [6, 7].

- In such a system, environmental sensors are used to capture activities in the monitored environment and produce corresponding signals. In practice, several types of sensors, such as infrared, vibration, laser and pressure sensors, are used to detect changes in the environment. A passive system was designed using vibration sensors to detect and recognize changes in floor vibration [8, 9]. With this design, the system recognized the floor vibration and could distinguish between falls and other postures. Therefore, systems with this type of sensor were developed to detect the fall status of victims who had fainted or were strained, based on the spectrum.

- Wearable sensors such as accelerometers and gyroscopes are usually attached to the human body to determine tilt angles related to the recognition of human postures. To build recognition systems with these wearable sensors, two approaches, the threshold method and machine learning algorithms, were applied [10]. In particular, acceleration sensors were used to take measurements at the human chest. The signals obtained from the sensors were transmitted to a computer for processing and then for recognizing human motion states (fall or normal state) based on the threshold method [11]. The experimental results of that research showed high accuracy for normal and fall cases: the standing state was recognized with approximately 100% accuracy and the walking state with about 100%, while two cases, falling back without lying and falling left, had accuracy under 95%. The advantages of this system are that it is small and easy to move.

- In computer vision systems, cameras are usually installed at fixed positions in a house or room to supervise the activities of elderly people. Image processing is applied to the image data to identify human postures. A Moving Average (MA) method was employed in a camera-based system to detect fall states [12]. In particular, this approach allows fall states to be detected based on the depth map and normal color information [13]. Moreover, the History Moving Image (HMI) was utilized to classify the collected data to find the moving state. The HMI features were extracted to search for output movements based on RGB depth maps. For fall recognition, a Support Vector Machine (SVM) algorithm was applied to identify fall states using a camera system with RGB-Depth [14].

- In recent years, machine learning methods have been employed for the recognition of many problems related to image data. In particular, for the recognition of human gestures (standing, sitting and lying), three methods, the SVM, the decision tree and Naive Bayes, were utilized [15]. The results showed that the SVM method had an accuracy of 100%, the decision tree 90% and Naive Bayes about 85%. Thus, the SVM algorithm was the most accurate of the three methods.

- The Kinect camera system has been used in many applications in recent years. In one fall detection application, the system was built with a Kinect camera and an accelerometer to increase the recognition accuracy. Recognition methods such as the SVM, the Upper Fall Threshold (UFT) and the Lower Fall Threshold (LFT) algorithms were applied. The final result showed that recognition using the SVM with depth data combined with acceleration features had higher accuracy than the other methods [16].

- The purpose of this research is to establish a system for fall recognition and warning for elderly people using a Kinect camera. The data obtained are fall and normal postures. For classification of the postures, a Principal Component Analysis (PCA) algorithm is applied to extract features of the data. Then, a Support Vector Machine (SVM) algorithm is employed to classify fall and non-fall postures. In addition, a warning system is designed to send a mobile message to relatives when a fall is recognized. Experimental results are presented to show the effectiveness of the proposed approach.

2. Data Acquisition and Materials

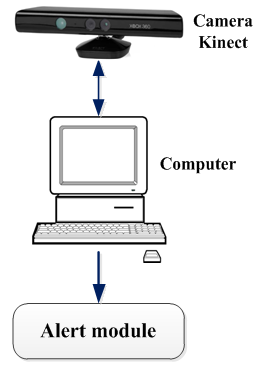

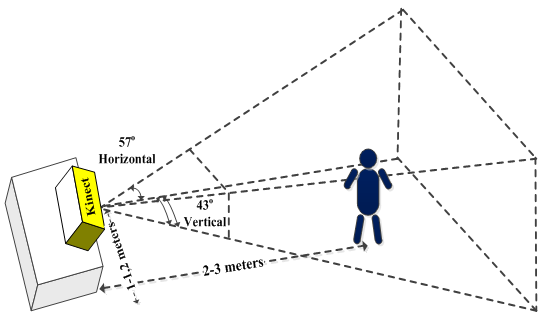

- In this research, the fall recognition system for elderly people has three main parts: data acquisition from the Kinect camera, a computer for data processing and fall recognition, and an alarm module, as shown in Figure 1. The Kinect camera system, which has a camera and a laser sensor, can produce skeletal data of the body joints. The skeletal data obtained have three axes, one of which carries depth information. In particular, the depth axis allows the distance from the camera center to an object to be determined. Because the camera system produces skeletal data with three axes, the detection of human motions and the recognition of falls become easier.

| Figure 1. System of fall recognition and alert |

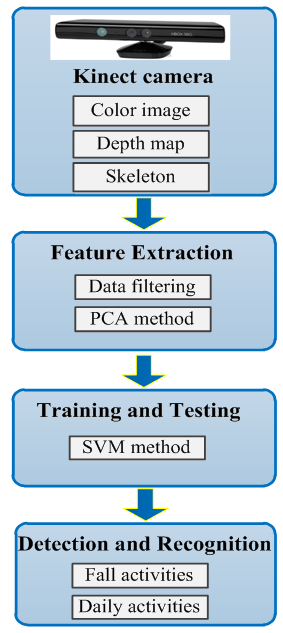

| Figure 2. Block diagram of the recognition system |

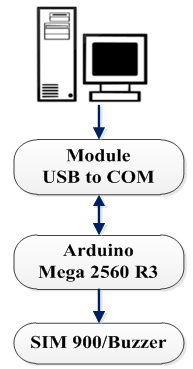

| Figure 3. Block diagram of an alarm system |

3. Kinect Data

3.1. Skeleton of Kinect System

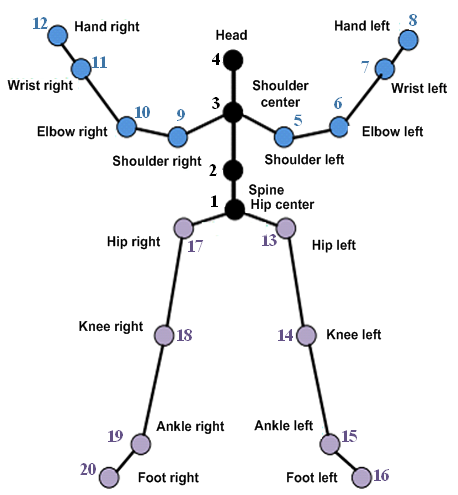

| Figure 4. Diagram of twenty body-joint positions |

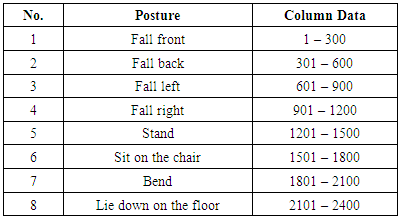

3.2. Data Acquisition

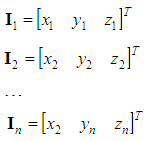

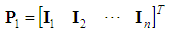

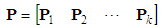

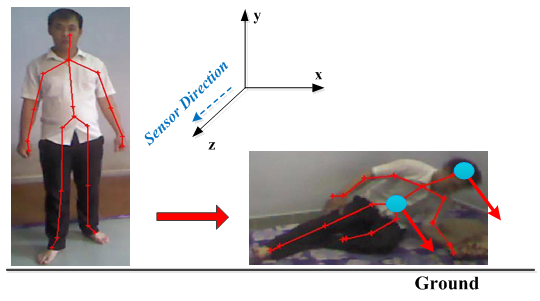

- In this paper, a Kinect camera system was installed at a fixed position in a room, and three subjects (two males and one female) were instructed to perform experiments with different postures. The subjects were 19-26 years old and weighed between 51 and 75 kg. The subjects were instructed to perform eight postures: “fall front”, “fall back”, “fall right”, “fall left”, “stand”, “sit” on a chair, “bend” and “lie down” on the floor. In this recognition system, the Kinect camera is fixed at an appropriate position corresponding to a region of view with a height of 1.2 m and a depth of 3 m for the collection of 3D skeletal data, as shown in Figure 5. In particular, each 3D data point of a human body joint obtained from the camera is denoted by three coordinates (x, y, z), called (Horizontal, Vertical, Depth), for the joint position.

| Figure 5. Schematic of the Kinect camera for collection of 3D image |

| (1) |

| (2) |

| (3) |

| (4) |

| Figure 6. Description of data, p, arranged to be a column vector |
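The arrangement of joint coordinates into a column vector can be sketched as follows. This is a minimal illustration, not the authors' implementation: it assumes each frame holds twenty joints with (x, y, z) coordinates, that the coordinates are interleaved joint by joint, and that the data matrix P holds one posture vector per column. The names `frame_to_vector` and `build_data_matrix` are hypothetical, and the frames are random placeholder data.

```python
import numpy as np

N_JOINTS = 20  # the Kinect skeleton described above has twenty joints


def frame_to_vector(joints):
    """Flatten one skeleton frame of shape (20, 3) into a column vector p of shape (60, 1)."""
    joints = np.asarray(joints, dtype=float)
    if joints.shape != (N_JOINTS, 3):
        raise ValueError(f"expected shape ({N_JOINTS}, 3), got {joints.shape}")
    # Assumed ordering: x1, y1, z1, x2, y2, z2, ..., x20, y20, z20
    return joints.reshape(-1, 1)


def build_data_matrix(frames):
    """Stack k column vectors side by side into a matrix P of shape (60, k)."""
    return np.hstack([frame_to_vector(f) for f in frames])


# Placeholder frames standing in for recorded skeletal data
rng = np.random.default_rng(0)
frames = [rng.normal(size=(N_JOINTS, 3)) for _ in range(5)]
P = build_data_matrix(frames)
```

With this layout, row 1 of `P` holds the vertical (y) coordinate of the first joint across all frames, which is the kind of per-joint trace compared between fall and non-fall states.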

| Figure 7. Illustrating activities of human falls |

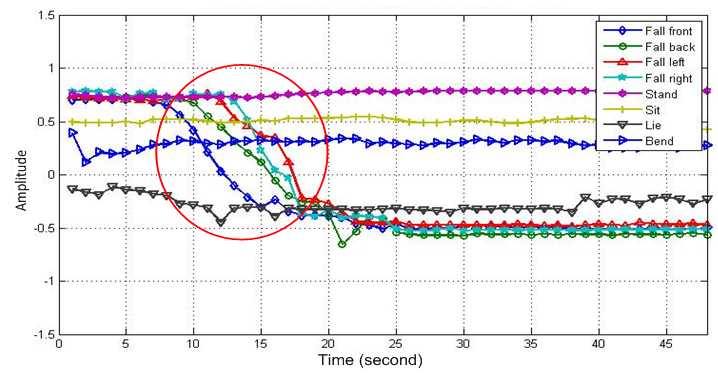

| Figure 8. Y-axis coordinates of the head joints between fall and non-fall states |


4. Methodology

4.1. Feature Extraction using PCA Algorithm

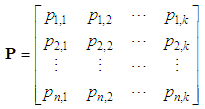

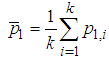

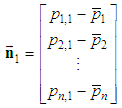

- A Principal Component Analysis (PCA) algorithm is applied to extract feature components of fall data. In particular, skeletal data of fall and non-fall postures are pre-processed to produce the data matrices. Thus, the average value

, with i=1,2,…,k is determined from the row components of the matrix P in Eq. (4) and this equation is expressed as follows:

, with i=1,2,…,k is determined from the row components of the matrix P in Eq. (4) and this equation is expressed as follows:  | (5) |

. Next step of this PCA algorithm is that the Standard Deviation (SD) will allow to calculate vectors as follows:

. Next step of this PCA algorithm is that the Standard Deviation (SD) will allow to calculate vectors as follows:  | (6) |

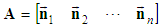

. From the set of the vectors,

. From the set of the vectors,  , a matrix A is built as follows:

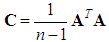

, a matrix A is built as follows: | (6) |

| (7) |

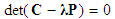

| (8) |

| (9) |

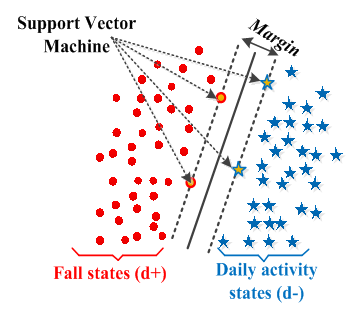
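Since the equations of this section are not reproduced in the text, the following is only a sketch of a conventional eigen-decomposition PCA that matches the description (average, deviation vectors, matrix A). It assumes that P holds one posture vector per column, that the deviation step is subtraction of the average, and that the smaller k x k eigenproblem is solved in the eigenface style. The function names and the random test data are hypothetical, and `n_components` must not exceed k - 1.

```python
import numpy as np


def fit_eigen_postures(P, n_components):
    """Return (mean, U): the average posture and the leading eigen postures.

    P has shape (d, k), one posture vector per column.
    U has shape (d, n_components) with orthonormal columns.
    """
    mean = P.mean(axis=1, keepdims=True)      # average posture vector
    A = P - mean                              # deviation of each posture from the average
    # Solve the small k x k problem: if (A^T A) v = lambda v, then A v is an
    # eigenvector of A A^T with the same eigenvalue.
    eigvals, V = np.linalg.eigh(A.T @ A)      # eigenvalues in ascending order
    order = np.argsort(eigvals)[::-1][:n_components]
    U = A @ V[:, order]
    U /= np.linalg.norm(U, axis=0, keepdims=True)
    return mean, U


def project(P, mean, U):
    """Project posture vectors onto the eigen postures; result has shape (n_components, k)."""
    return U.T @ (P - mean)


# Placeholder data: 8 posture vectors of dimension 60
rng = np.random.default_rng(0)
P = rng.normal(size=(60, 8))
mean, U = fit_eigen_postures(P, n_components=3)
features = project(P, mean, U)
```

The low-dimensional columns of `features` are what a classifier would be trained on in place of the raw 60-dimensional vectors.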

4.2. Support Vector Machine Algorithm

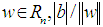

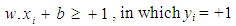

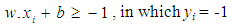

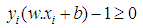

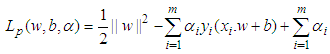

- In this paper, an SVM method is utilized in the fall recognition system to classify between two classes of postures, fall and normal activities of elderly people, so that the fall state can be recognized. After the feature data of the postures are determined using the PCA, the SVM algorithm is applied to train on the PCA feature data and classify the fall and non-fall states. In the SVM algorithm, a linear hyperplane divides the data set into two subsets. Assume that the pattern elements (the datasets after the PCA) are expressed as follows:

| (10) |

where yi is the subclass of xi. Therefore, to identify the hyperplane, one needs to find the plane that maximizes the Euclidean distance between the two classes, as shown in Figure 9. In particular, the vectors closest to the plane are called the support vectors; the positive samples lie in the region of y = +1 (H1) and the negative samples lie in the region of y = -1 (H2).

| Figure 9. Description of the SVM algorithm with hyperplane |

| (11) |

where w is the vector perpendicular to the separating hyperplane, |b|/||w|| is the distance from this hyperplane to the origin, and ||w|| is the magnitude of w. Moreover, d+ (d-) is the shortest distance from the separating hyperplane to the positive (negative) samples. Therefore, the margin of the hyperplane is d+ + d- in the linear case, and the support vector algorithm looks for the separating hyperplane with the largest margin using the primal Lagrangian. Suppose that all training data satisfy the following constraints:

| (12) |

| (13) |

| (14) |

| (15) |

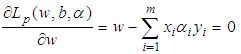

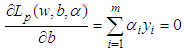

is the Lagrange multiplier. Taking the derivative of Lp with respect to w, the equation is calculated as follows:

| (16) |

| (17) |

| (18a) |

| (18b) |

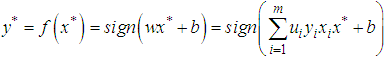

coefficients. After training, the training points with non-zero coefficients are the support vectors and are located on one of the two hyperplanes, H1 and H2. If the data point to be classified is x*, its subclass (-1 or +1) is given by the following formula:

| (19) |
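The displayed equations of this section are not reproduced in the text. For reference, the standard linear maximum-margin formulation that matches the surrounding description is sketched below. The symbols b (offset) and \alpha_i (Lagrange multipliers), as well as the correspondence of individual lines to Eqs. (10)-(19), are assumptions based on the textbook formulation rather than on the paper itself.

```latex
\begin{aligned}
&\text{Training set:} && \{(x_i, y_i)\},\quad i = 1,\dots,l,\quad x_i \in \mathbb{R}^d,\ y_i \in \{-1, +1\} \\
&\text{Hyperplane:} && w \cdot x + b = 0 \\
&\text{Constraints:} && x_i \cdot w + b \ge +1 \ \text{for } y_i = +1, \qquad x_i \cdot w + b \le -1 \ \text{for } y_i = -1 \\
&\text{Combined:} && y_i (x_i \cdot w + b) - 1 \ge 0 \quad \forall i \\
&\text{Margin:} && d_+ + d_- = \frac{2}{\lVert w \rVert} \\
&\text{Primal Lagrangian:} && L_P = \tfrac{1}{2}\lVert w \rVert^2 - \sum_{i=1}^{l} \alpha_i y_i (x_i \cdot w + b) + \sum_{i=1}^{l} \alpha_i, \quad \alpha_i \ge 0 \\
&\text{Stationarity:} && \frac{\partial L_P}{\partial w} = 0 \Rightarrow w = \sum_{i=1}^{l} \alpha_i y_i x_i, \qquad \frac{\partial L_P}{\partial b} = 0 \Rightarrow \sum_{i=1}^{l} \alpha_i y_i = 0 \\
&\text{Decision:} && f(x^*) = \operatorname{sign}(w \cdot x^* + b)
\end{aligned}
```

In this formulation only the training points with \alpha_i > 0 contribute to w, which is why they are called support vectors.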

5. Results and Discussion

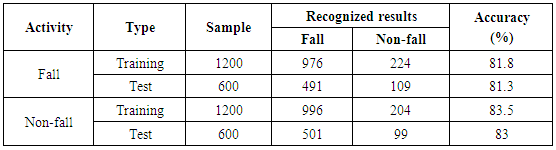

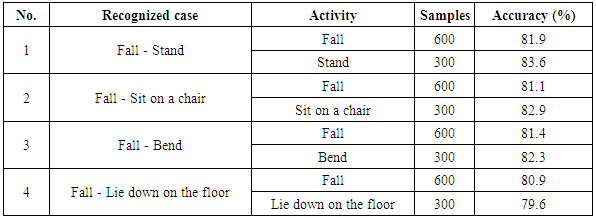

- In the experiments, offline databases were used for feature extraction with the PCA algorithm, and the extracted features were then used to train the SVM for classification. The experimental results illustrate the effectiveness of the proposed method in the fall recognition system.
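The training and classification step can be illustrated with a small linear SVM. The paper does not state which solver was used, so this sketch substitutes plain sub-gradient descent on the soft-margin hinge-loss objective, written in NumPy; in practice a dedicated SVM library would normally be used. The two-dimensional synthetic clusters stand in for PCA features, the label convention (+1 for fall, -1 for non-fall) is an assumption, and all names and parameter values are hypothetical.

```python
import numpy as np


def train_linear_svm(X, y, C=1.0, lr=0.01, epochs=500):
    """Minimise 0.5 * ||w||^2 + C * sum(max(0, 1 - y_i (w . x_i + b))) by sub-gradient descent."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    w = np.zeros(X.shape[1])
    b = 0.0
    for _ in range(epochs):
        margins = y * (X @ w + b)
        violating = margins < 1.0             # samples inside the margin or misclassified
        grad_w = w - C * (y[violating][:, None] * X[violating]).sum(axis=0)
        grad_b = -C * y[violating].sum()
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


def classify(X, w, b):
    """Return +1 or -1 for each row of X according to the sign of w . x + b."""
    return np.where(np.asarray(X, dtype=float) @ w + b >= 0.0, 1, -1)


# Synthetic, well-separated stand-ins for PCA features (not real skeletal data)
rng = np.random.default_rng(0)
fall = rng.normal(loc=3.0, scale=0.5, size=(20, 2))
non_fall = rng.normal(loc=-3.0, scale=0.5, size=(20, 2))
X = np.vstack([fall, non_fall])
y = np.array([1] * 20 + [-1] * 20)

w, b = train_linear_svm(X, y)
accuracy = float((classify(X, w, b) == y).mean())
```

On real posture features the classes overlap far more than in this toy example, so accuracy should be measured on held-out recordings rather than on the training set.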

5.1. Representation of Fall and Non-Fall Postures

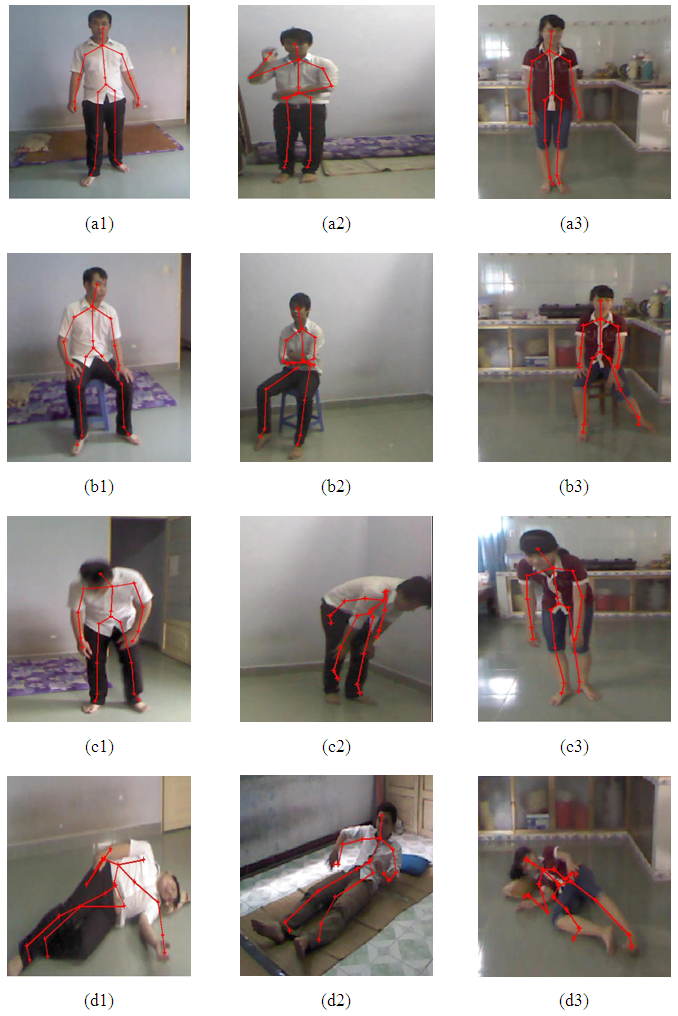

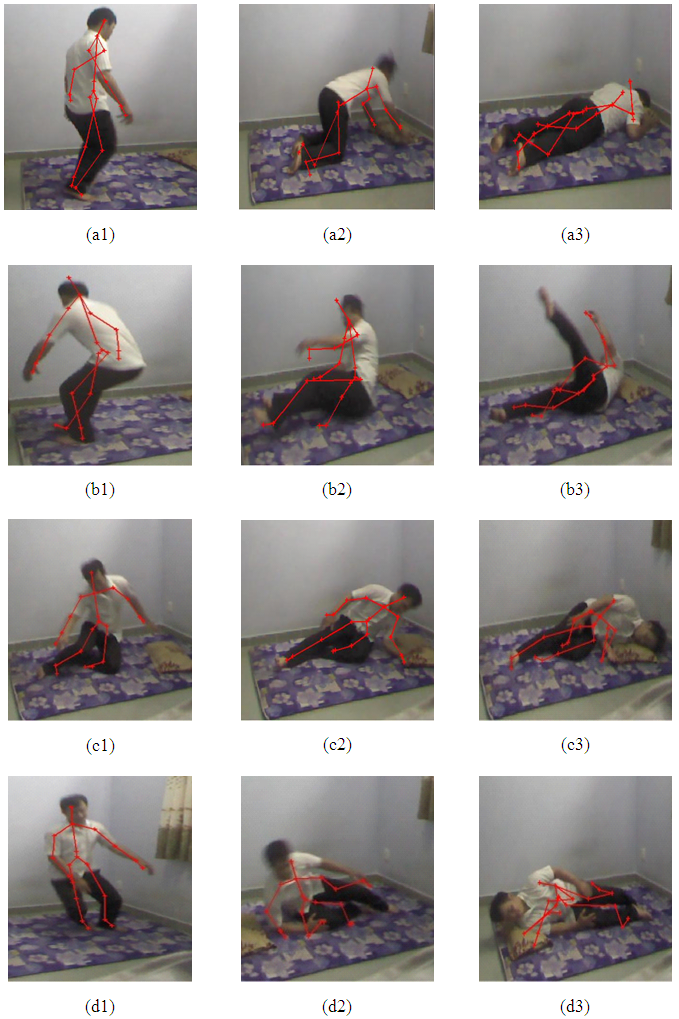

- In this research, three subjects were invited to take part in the experiments and were introduced to the operation of the fall recognition system so that they understood it well. Every subject was instructed to perform different activities, and each of the eight postures was performed ten times. In particular, the subjects were instructed to perform postures such as “Stand”, “Sit”, “Bend”, “Lying” and “Fall”, in which the fall state has four falling postures (forward, backward, left and right). The data were thus collected from the three subjects with different postures using the Kinect camera; the non-fall data can be arranged into four types of states: “Stand”, “Bend”, “Lying” and “Sit”.

| Figure 10. Images of non-fall postures: (a1), (a2), (a3) “Stand”; (b1), (b2), (b3) “Sit on a chair”; (c1), (c2), (c3) “Bend”; (d1), (d2), (d3) “Lie down on the floor” |

| Figure 11. Images of fall postures: (a1), (a2), (a3) “Fall front”; (b1), (b2), (b3) “Fall back”; (c1), (c2), (c3) “Fall left”; (d1), (d2), (d3) “Fall right” |

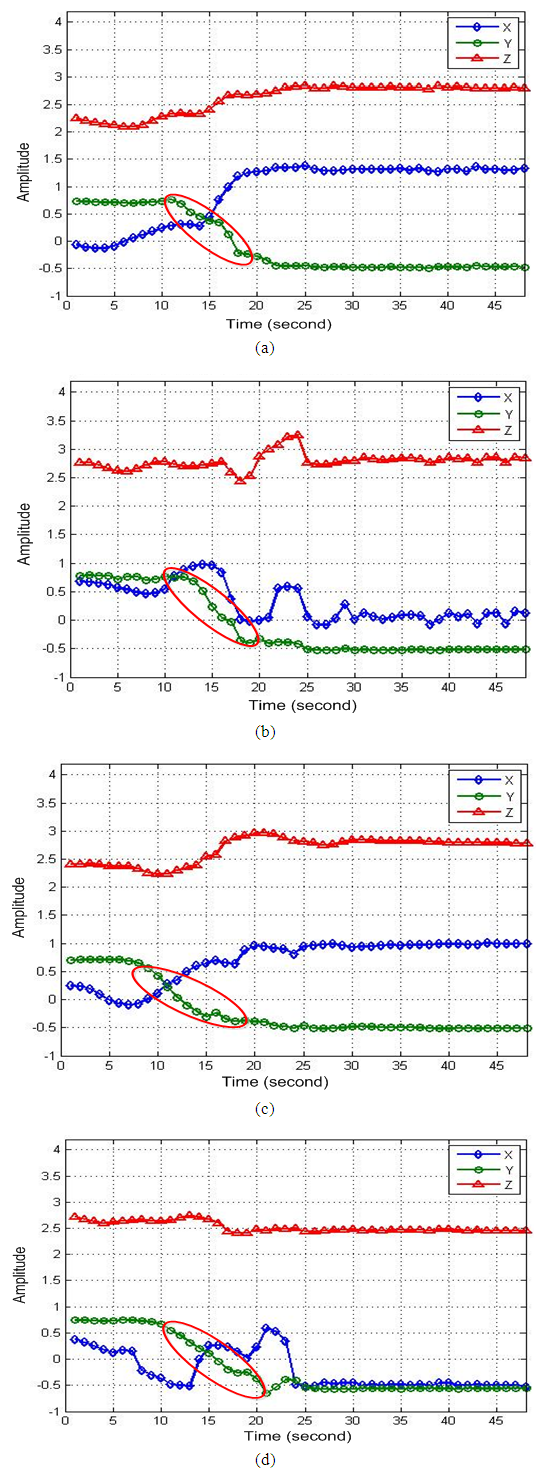

| Figure 12. Representation of four fall states related to head joints: (a) Fall forward. (b) Fall backward. (c) Fall to left. (d) Fall to right |

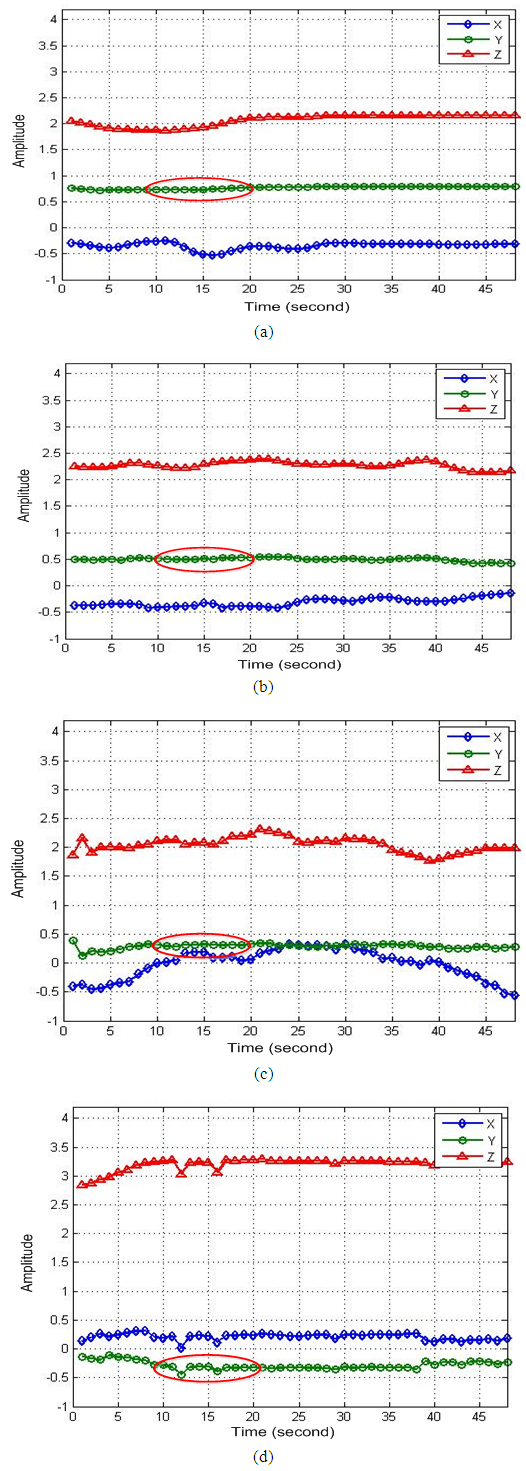

| Figure 13. Representation of four non-fall states related to head joints: (a) Stand. (b) Sit on a chair. (c) Bend. (d) Lie down on the floor |

5.2. Fall Recognition Using the SVM

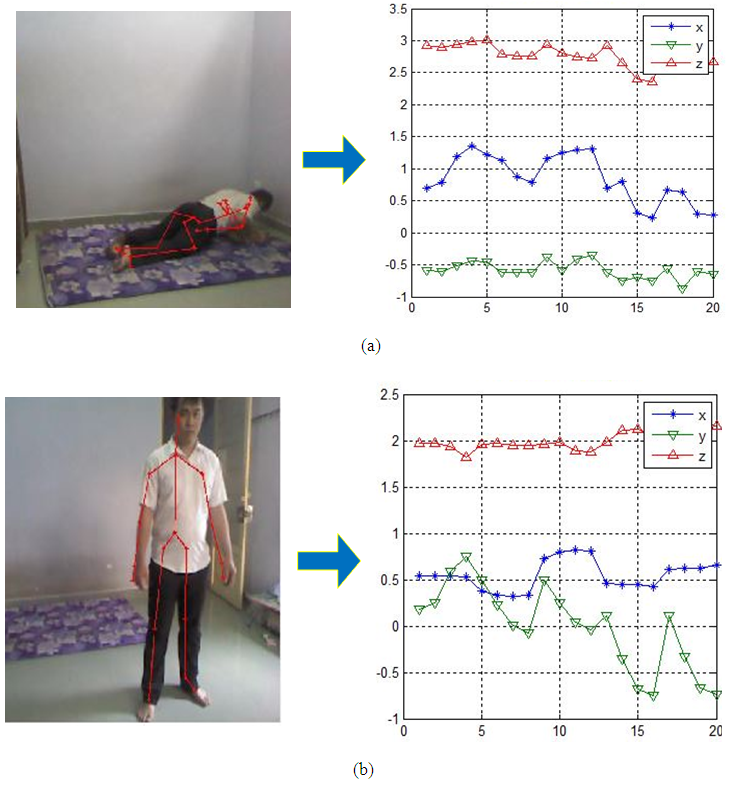

- Figure 14 shows experimental results for human postures in the recognition of fall and non-fall states. In particular, the figure shows two postures, one for a fall on the floor and the other for the standing state, each with three skeletal data lines (red, green and blue).

| Figure 14. Posture images and corresponding data lines: (a) Fall posture and simulation result; (b) Non-fall posture and simulation result |


5.3. Alert System for Falling

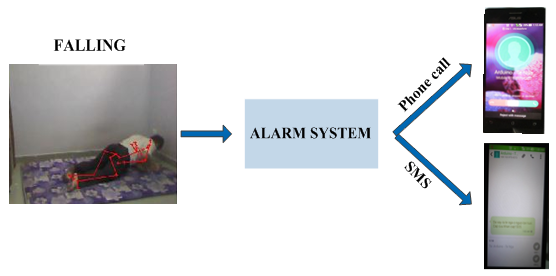

- In this research, an alert system was designed to send a message to the subject’s relatives when a fall of an elderly person is recognized, as shown in Figure 15. In particular, notification messages are stored in the system, ready to be sent to any mobile phone as an announcement and an SMS. The mobile phone then receives the message with the content "Falling. Emergency! Help".
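The alert logic can be sketched as a small function that forwards the fixed message whenever the classifier reports a fall. This is an illustrative sketch only: the paper does not describe the messaging interface, so the sender is passed in as a callable, the label convention (+1 for fall) is an assumption, and `notify_on_fall`, `fake_send` and the phone number are hypothetical placeholders.

```python
ALERT_TEXT = "Falling. Emergency! Help"  # message content quoted in the text above


def notify_on_fall(label, send_sms, phone_number):
    """Send the alert text if the classifier label indicates a fall (assumed to be +1).

    send_sms is any callable taking (phone_number, text); returns True if a message was sent.
    """
    if label == 1:
        send_sms(phone_number, ALERT_TEXT)
        return True
    return False


# Stand-in sender that records messages instead of contacting a real SMS service
outbox = []


def fake_send(number, text):
    outbox.append((number, text))


sent_for_fall = notify_on_fall(1, fake_send, "+84-000-000-000")
sent_for_non_fall = notify_on_fall(-1, fake_send, "+84-000-000-000")
```

Passing the sender as a parameter keeps the recognition code independent of whichever SMS or call gateway is actually deployed.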

| Figure 15. Description of the fall system with notification message and calls |

6. Conclusions

- In this research, the skeletal data of three subjects with eight postures were obtained from a Kinect system and converted into images. Features were extracted from these image data using a PCA method. For fall posture classification, an SVM algorithm was employed to train on the image data set and then to classify fall and non-fall postures. Experimental results showed that the average accuracies for fall and non-fall postures are 81.8% and 83.5%, respectively. In addition, the fall recognition system could send a message to relatives through mobile phones. Given these classification results, the PCA-SVM algorithm is suitable for use in future related studies.

ACKNOWLEDGEMENTS

- The authors would like to acknowledge the support of HCMC University of Technology and Education, Vietnam, with Grant No. T2016 – 44TĐ. Moreover, we would like to thank the students and colleagues who contributed to this research.
